Lederman surveys the relationship between two notions, common knowledge (the formal, iterated concept) and public information (the informal, intuitive concept), and asks whether they really are coextensive, as the literature has mostly assumed. Lederman’s central motivation is that their equivalence is a load-bearing assumption across several literatures, yet it has been taken for granted rather than argued for. The paper aims to clear the ground by subjecting this assumption to sustained scrutiny.

Two Notions of Common Knowledge

Lederman distinguishes two concepts that the literature often conflates.

- Public information is the intuitive, informal notion drawn from Jane Heal.

  - For example, two diners quarrel loudly in a restaurant; everyone present can hear it, and can see that everyone else can hear it too. The information is out in the open and therefore public.

- Common knowledge is the formal, iterated notion: a group commonly knows p iff they all know p, they all know that they all know p, and so on ad infinitum.

These look like they could come apart: one is an intuitive feature of situations (openness, visibility), the other is a formal property of epistemic states (infinite iteration). Heal’s paper is arguably where the situational intuition gets its sharpest formulation; Lederman is asking whether the formal apparatus that was subsequently built to capture that intuition actually vindicates it, or subtly transforms it. The literature has overwhelmingly treated the two as equivalent.

The Default Position: public information and common knowledge are treated as equivalent, two descriptions of the same phenomenon.

Lederman calls this the Default Position: public information just is (or necessarily produces) common knowledge. The formal notion gets deployed across game theory, convention theory, linguistic pragmatics, and shared intention as though it captures what we mean by information being “out in the open.” If the two notions come apart, those applications may be misgrounded.

An Argument for the Default Position

Lederman reconstructs an inductive argument for the Default Position through a series of scenarios involving a professor and students playing a coordination game (if the entire class writes down the same US state, they win a prize).

The key concept here is mutual knowledge: level 1 mutual knowledge is when everyone knows p, level 2 is when everyone knows that everyone knows p, level 3 is when everyone knows that everyone knows that everyone knows p, and so on. Mutual knowledge that stops at some finite level falls short of common knowledge, which requires mutual knowledge at every level.
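In standard epistemic-logic notation the levels and their limit can be written compactly. This is a sketch: the symbols K_i (agent i knows), E^n (level n mutual knowledge), C (common knowledge) and the group G are conventional labels assumed here, not necessarily Lederman’s own.

```latex
\begin{aligned}
E^{1}p   &:= \bigwedge_{i \in G} K_i\,p \\
E^{n+1}p &:= \bigwedge_{i \in G} K_i\,E^{n}p \qquad (n \ge 1) \\
C\,p     &:= \bigwedge_{n \ge 1} E^{n}p
\end{aligned}
```

Because C p is an infinite conjunction, no finite stack of E^n p amounts to it; that is the gap the scenarios exploit.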

Lederman’s strategy is to start with a clear case of public information, strip away the openness, and then rebuild mutual knowledge one level at a time. At each step, everyone shares more meta-knowledge than before, yet the information still doesn’t feel public.

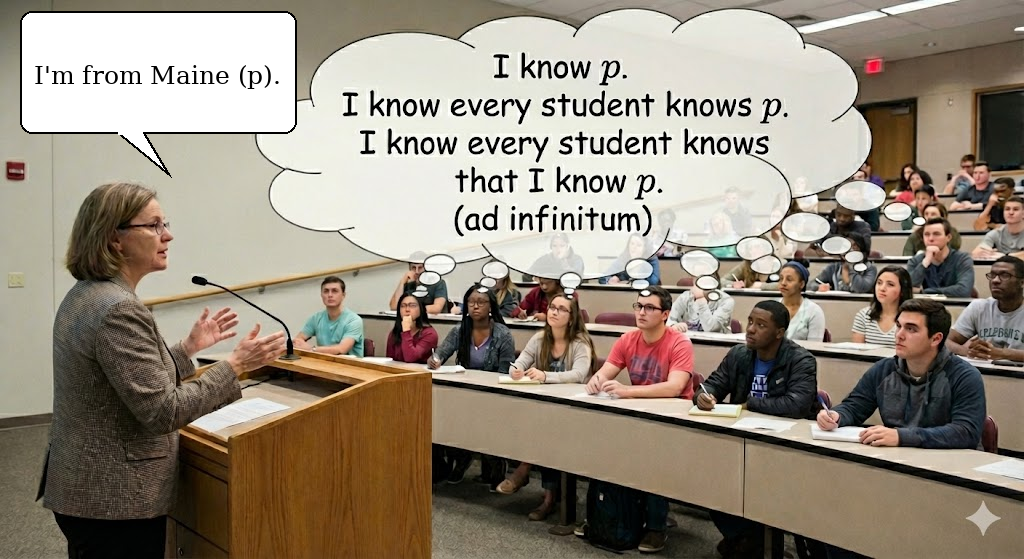

Public Announcement (Every Level)

This is the baseline. The professor announces to the class in a shared environment that she grew up in Maine. Everyone hears it, and everyone can see that everyone else heard it. Coordination on “Maine” is therefore natural. This is unambiguously a case of both public information and common knowledge (mutual knowledge at every level).

Public announcement: the professor tells the whole class. Everyone hears p, and everyone can see that everyone else heard it. This is the baseline case: both public information and common knowledge.


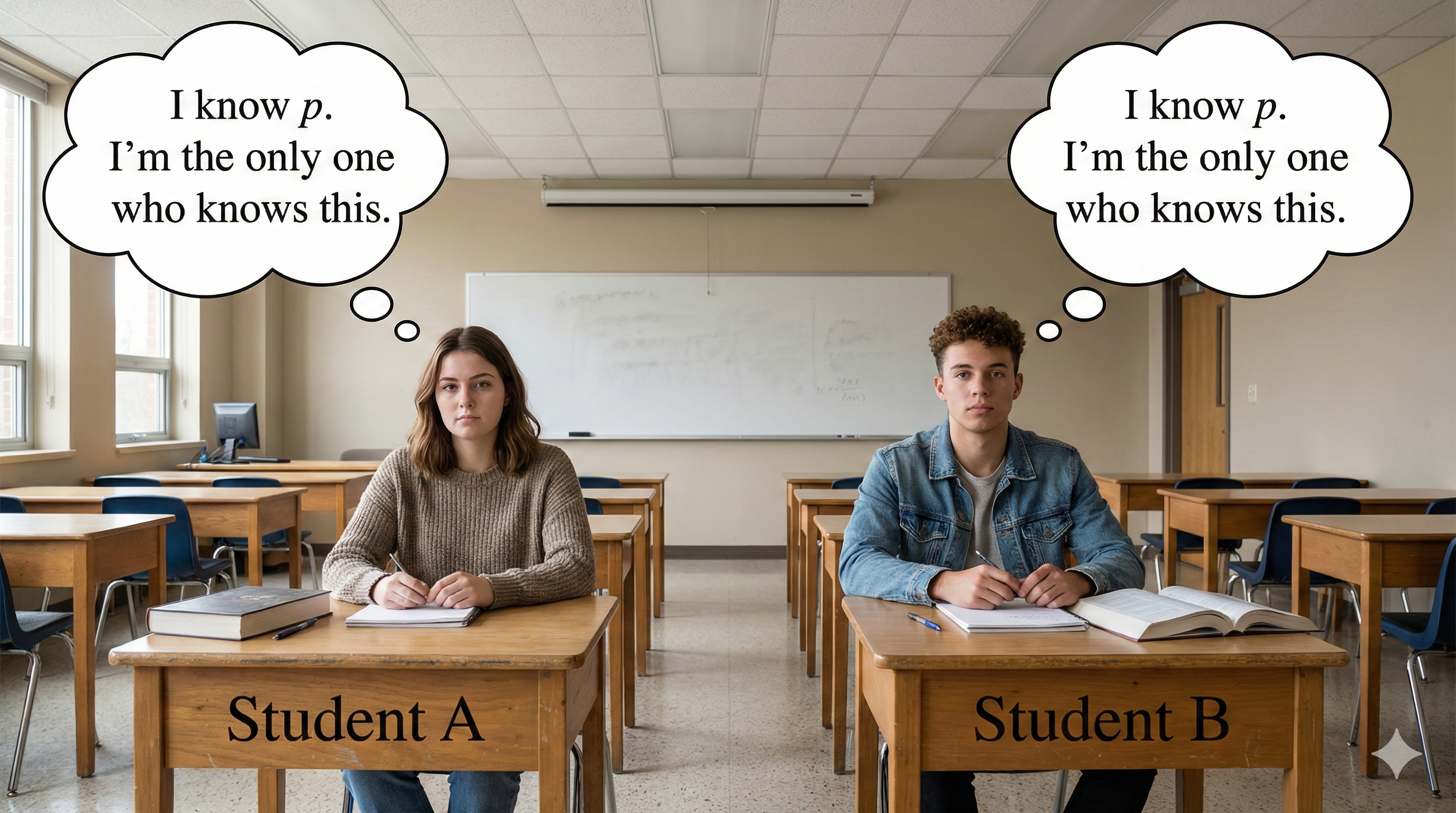

Private Information (Level 1)

The professor tells each student privately, “I grew up in Maine and you’re the only person I’m telling this to.” Every student now knows p (level 1 mutual knowledge), but each thinks they’re the only one who knows. Coordination therefore collapses: there’s no openness. This illustrates that universal knowledge is necessary but not sufficient for public information.

Private disclosure: the professor tells each student individually, assuring each they’re the only one who knows p. Universal knowledge without openness; mutual knowledge at level 1 only.


Higher Levels (Levels 2, 3, …)

This same structure generalizes to any finite level. At each stage, the professor can manufacture one more layer of mutual knowledge through private disclosure, whispering to each student that she’s told everyone about the previous layer, while falsely assuring each student that they alone are receiving this meta-disclosure. The result is the same every time: another level of mutual knowledge is achieved, but openness never appears, because the next layer up remains private. True epistemic openness cannot be assembled merely by stacking individual cognitive states.
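To make the sequence concrete, here is a minimal Python sketch of a toy possible-worlds model. It is our own construction, not Lederman’s, and the names `truthful`, `all_worlds`, `same_view` and `mutual_level` are hypothetical. A world records whether p holds and which private messages each student received: layer 0 says p, and layer j says that every student received layer j-1. The professor’s false “you alone” assurances cannot be known, so they are not modelled as knowledge. They show up only as each student’s inability to rule out that others were left out. A student knows something iff it holds in every world matching what she observed.

```python
from itertools import product

# Toy possible-worlds model (hypothetical, not from Lederman's paper).
# A world is (p, msgs): p is "the professor grew up in Maine", and
# msgs[i][j] is True iff student i privately received the layer-j message.
# Layer 0 says "p"; layer j > 0 says "every student received layer j-1".


def truthful(p, msgs):
    """True iff every message the professor actually sent is true."""
    for row in msgs:
        for j, received in enumerate(row):
            if not received:
                continue
            if j == 0 and not p:
                return False
            if j > 0 and not all(other[j - 1] for other in msgs):
                return False
    return True


def all_worlds(n, k):
    """All worlds with n students and k message layers a truthful professor allows."""
    worlds = []
    for p in (False, True):
        for bits in product((False, True), repeat=n * k):
            msgs = tuple(bits[i * k:(i + 1) * k] for i in range(n))
            if truthful(p, msgs):
                worlds.append((p, msgs))
    return worlds


def same_view(i, w, v, public):
    """Can student i fail to distinguish world w from world v?"""
    if public:
        # Public channel: everyone sees who received which message.
        return w[1] == v[1]
    # Private channel: student i sees only her own messages.
    return w[1][i] == v[1][i]


def mutual_level(n, k, public=False):
    """Highest m such that E^m p holds in the actual world (every student got
    every layer); float('inf') if the levels reach a fixed point, i.e. C p."""
    worlds = all_worlds(n, k)
    actual = (True, tuple((True,) * k for _ in range(n)))
    holds = {w for w in worlds if w[0]}  # worlds where E^0 p, i.e. p, holds
    level = 0
    while True:
        # E^(level+1) p holds at w iff, for every student, E^level p holds in
        # every world she cannot distinguish from w.
        new = {w for w in worlds
               if all(v in holds for i in range(n) for v in worlds
                      if same_view(i, w, v, public))}
        if actual not in new:
            return level
        if new == holds:
            return float("inf")  # applying E again changes nothing: common knowledge
        holds, level = new, level + 1


for k in range(4):
    print(k, mutual_level(2, k), mutual_level(2, k, public=True))
```

In this toy model, k layers of private disclosure yield exactly k levels of mutual knowledge and never more. Letting each student see the whole message profile, a crude stand-in for a public announcement, reaches the fixed point and returns `inf`. The model only tracks levels of knowledge, so it illustrates the negative result without settling what public information consists in.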

The Inductive Conclusion

No finite level of mutual knowledge suffices for public information. Since common knowledge is the infinite limit (what you get when every finite level holds), this motivates the Default Position: public information requires common knowledge.

Discussion: A Tension in the Argument

The inductive argument actually proves two things at once, and the question is whether they can be pulled apart.

The negative result is solid: no finite level of mutual knowledge is enough for public information. The cases show this clearly: at level 1, level 2 and level 3 alike, openness never appears. Nobody disputes this.

The positive conclusion is the leap: therefore, public information requires common knowledge (the infinite limit). This only follows if you assume the reason those cases lack openness is specifically because they’re missing the next level of mutual knowledge. But there’s another explanation: every case in the sequence involves systematic deception through private channels. A professor whispering elaborate layered lies to each student individually is not a shared perceptual environment with one level stripped away; it’s a fundamentally different kind of situation. What’s missing is not the next level of meta-knowledge but the shared environment itself.

If that’s correct, the right analysis of public information might not be about levels of knowledge at all, but about the kind of situation you’re in: a shared perceptual environment where things are out in the open, versus privately mediated information where agents have no access to each other’s epistemic positions. That’s a fundamentally different kind of explanation. Lederman flags this as a live option but notes it hasn’t been developed. And it connects directly to the Shared Environment mechanism examined next.
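The gap between the negative and positive halves of the induction can be put schematically. The notation is ours, not Lederman’s: Pub p for “the group has public information that p”, E^n p for level n mutual knowledge, C p for common knowledge, □ for necessity and ◇ for possibility. Each scenario in the sequence establishes only the first line, while the Default Position needs the last.

```latex
\begin{aligned}
&\text{Case } n \text{ shows:}      && \Diamond\,(E^{n}p \wedge \neg\,\mathrm{Pub}\,p),
  \ \text{i.e. } \neg\Box\,(E^{n}p \rightarrow \mathrm{Pub}\,p) \\
&\text{Inductive step needs:}       && \Box\,(\mathrm{Pub}\,p \rightarrow E^{n+1}p) \\
&\text{Conclusion:}                 && \Box\,(\mathrm{Pub}\,p \rightarrow C\,p)
\end{aligned}
```

The first line says only that E^n p is insufficient. It is equally compatible with Pub p turning on a situational condition (a shared environment) that is absent throughout the sequence, so it does not by itself deliver the second line.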

Problems with the Default Position?

The previous section asked whether there’s a good argument for the Default Position and found the best one wanting. This section flips the question: what about the standard argument against it?

The obvious objection is that common knowledge requires knowing infinitely many things, which finite agents can’t do. This worry leads most authors to retreat to a weaker thesis, Ideal Common Knowledge: necessarily, if ideal agents had public information that p, they would commonly know p. This doesn’t claim real humans achieve common knowledge, only that public information and common knowledge are connected in principle under idealized conditions.

The real interest of this section is the mechanism the literature developed to support Ideal Common Knowledge. The Shared Environment analysis (drawing on Lewis, Barwise, Clark & Marshall) says: agents have public information that p when there is some state of affairs E, a shared environment, such that:

1. E obtains.

2. E entails that everyone knows p.

3. E entails that everyone knows E.

The crucial feature is (3): the environment is self-advertising. It’s the kind of thing that, when it obtains, everyone can tell it obtains. Think of Heal’s quarreling diners: the quarrel isn’t just an event that everyone perceives; it’s an event that everyone can see everyone perceives, because the shared perceptual environment makes this transparent. The quarrel doesn’t just produce the information; it produces the publicity of the information.

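Condition (3) loops back on itself. Suppose, as the idealization allows, that agents know whatever is entailed by what they know. Since E obtains and entails that everyone knows E, each agent knows E, and therefore knows everything E entails, including that everyone knows p. So E entails that everyone knows that everyone knows p, and each further level of mutual knowledge is generated from the previous one in the same way. For ideal agents, the iteration goes all the way up.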
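The step from condition (3) to common knowledge can be spelled out as a short induction. This is a sketch under stated assumptions: we write S for the shared environment E, to keep it apart from the everyone-knows operator E^n (everyone knows, nested n times) and from C p (the conjunction of all E^n p). We read condition (2) as “E entails that everyone knows p”, and we assume ideal agents’ knowledge K_i is closed under entailment.

```latex
\begin{aligned}
&(2)               && \Box\,(S \rightarrow E^{1}p) \\
&(3)               && \Box\,\big(S \rightarrow \textstyle\bigwedge_{i} K_i\,S\big) \\
&\text{IH}         && \Box\,(S \rightarrow E^{n}p) \\
&\text{closure}    && \Box\,(K_i\,S \rightarrow K_i\,E^{n}p) \quad \text{for each } i \\
&\text{(3) + closure} && \Box\,\big(S \rightarrow \textstyle\bigwedge_{i} K_i\,E^{n}p\big)
                       = \Box\,(S \rightarrow E^{n+1}p) \\
&\text{so}         && \Box\,(S \rightarrow E^{n}p) \ \text{for all } n \ge 1,
                       \ \text{hence } \Box\,(S \rightarrow C\,p)
\end{aligned}
```

With condition (1), S obtains, so C p holds. The closure assumption is where idealization enters: it is exactly what finite agents lack.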

Discussion: Where Does the Explanatory Work Actually Live?

On the diagnosis suggested above, the induction’s failure cases lack openness because they lack a shared environment. The Shared Environment analysis is supposed to cash out that intuition. But notice where the explanatory weight falls. Condition (3), that E entails everyone knows E, is ultimately a fact about agents’ sensory capacities and their spatial relation to each other. The environment is self-advertising because of what agents can perceive and infer from their position in it.

This doesn’t undermine the account; nobody in this tradition claimed it would be entirely agent-free. The point was always to relocate the explanatory burden: the environment’s structure does the iterative work instead of agents explicitly reasoning through levels. But condition (3) makes it harder than it initially looks to draw a clean line between “situational” and “epistemic” explanations. The environment does its work through agents’ epistemic relations to it, which means the boundary between the two kinds of explanation is less stable than the initial distinction suggests.

Conclusion

Lederman’s upshot is deflationary: the relationship between common knowledge and public information is far less settled than the literature assumes. The Default Position has been taken for granted rather than argued for, and the best argument in its favor (the induction from the second section) has a gap between its negative and positive components. The weaker Ideal Common Knowledge thesis is more defensible, but even it has begun to face challenges. (Lederman also examines the connection between common knowledge and rational coordination through Rubinstein’s Electronic Mail Game and the notion of common p-belief, which suggest that weaker probabilistic notions may better capture what real-world coordination actually requires.)

Lederman emphasizes the downstream stakes: many philosophical projects have common knowledge baked in as a load-bearing assumption. Lewis’s account of convention, Stalnaker’s analysis of linguistic common ground, and accounts of shared intention all depend on the connection between publicness and common knowledge. If that connection is shakier than assumed, these theories inherit the instability. Lederman highlights linguistic common ground as especially pressing: speakers update their common ground in real time during conversation, which means any adequate account needs to explain how common knowledge gets generated and updated dynamically, not just how it could exist in principle.

Discussion: A Question for the Class

Is the right account of publicness ultimately individualistic (a matter of what agents know about what other agents know, iterated upward) or situational (a matter of being in the right kind of shared environment)? The Shared Environment analysis suggests the latter, and the tension in the inductive argument pushes in the same direction. But as the Shared Environment analysis showed, the boundary between the two is less stable than it initially appears; the situational account depends on epistemic conditions at its core.

Postscript: Some Provocations

Does “everyone knows” ever actually obtain?

The entire formal apparatus starts with a deceptively simple base case: everyone knows p. The professor announces she’s from Maine, and the model says: everyone knows it. But does everyone actually know? What if someone in the back of the room couldn’t hear clearly? What if a student walked in thirty seconds late? In practice, “everyone knows p” is already an idealization at level 1. The formal framework treats it as binary (either everyone knows or they don’t), but real epistemic situations are noisy and partial. If the very first step of the model doesn’t cleanly obtain in real life, what does that mean for everything built on top of it?

Who counts as being “in” the shared environment?

The Shared Environment account says that when E obtains, everyone in E knows E. But consider: a blind and deaf person is physically present in the restaurant when the quarreling diners raise their voices. They’re spatially in the environment, but the environment isn’t self-advertising to them. They have no perceptual access to the quarrel. So are they “in” E or not? If the answer is “no, because they lack the relevant perceptual capacities,” then being “in” the shared environment is itself an epistemic condition. The account is quietly filtering for agents with the right kind of sensory access, which means the supposedly situational explanation depends on facts about agents’ cognitive equipment from the very start. How shared is a “shared” environment, really?

Does the idealization to infinity actually idealize anything?

When the psychological impossibility objection comes up (humans can’t iterate infinitely), the standard move is to retreat to idealization: strip away cognitive limits and ask what would hold in principle. But idealizations are supposed to bear some continuous relationship to reality. When a physicist posits a frictionless plane, real planes approximate the ideal as friction decreases. The relationship is smooth. With mutual knowledge, there’s no analogous convergence. The induction Lederman reconstructs shows this: level 3 is no more public than level 2, level 7 is no more public than level 6. Adding another layer never gets you closer to publicness. The finite levels don’t converge on anything. So what exactly is the idealization to infinity tracking? If the finite cases don’t approximate the limit, is it still an idealization, or is it just a stipulation?

Why do the thought experiments have to be so contrived?

The scenarios that separate mutual knowledge from publicness all involve a professor whispering elaborate layered lies to individual students. These are wildly unrealistic. But that’s not a complaint about the methodology; it’s actually evidence for something important. In real life, mutual knowledge and publicness almost never come apart. The situations that produce mutual knowledge in practice are almost always shared environments: classrooms, conversations, public spaces. The only way to pry the two apart is through bizarre private deception scenarios that no one ever actually encounters. If the formal distinction between finite mutual knowledge and common knowledge only matters under conditions that never arise in the real world, what is the distinction actually doing for us?